2026-06-09

Where Can I Get Hired at 14? Legal Jobs for 14- and 15-Year-Olds

2026-06-09

The Teacher Aide Resume Example That Wins Interviews

2026-06-09

How New Graduates Can Set Professional Boundaries and Protect Themselves at Work

2026-06-08

The Link Between Office Comfort and Employee Engagement

2026-06-08

The Starbucks Barista Resume and Job Description Guide

2026-06-08

The Screenwriter Resume That Actually Lands Meetings

2026-06-08

Top 8 Learning Management Systems for Employee Training and Upskilling

2026-06-07

11 Job Interview Weaknesses Backed by Credible Evidence

2026-06-04

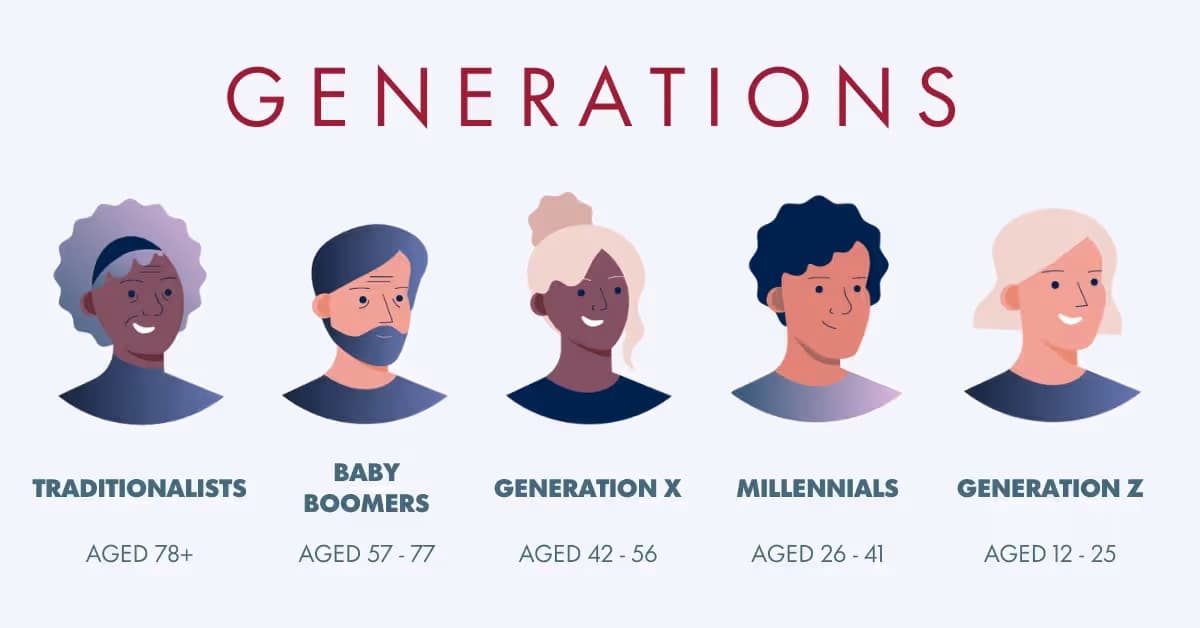

Millennials vs Gen Z at Work: What the Evidence Says

2026-06-02

Millennials Are What Years?

2026-06-01

Generational Work Differences: What the Evidence Says

2026-06-01